Visual Studio Code Error - "There are no active source control providers"

This blog post details steps to resolve the "There are no active source control providers" error in Visual Studio Code on Windows 10.

Updated Solutions to Classic Challenges

A personal website dedicated to helping IT professionals review where we've been, where we are, and maybe where we are headed.

In this post, I walk through setup and install of the new Windows Server 2019 Preview build 17623. The install will take place on a Windows 10 laptop running Hyper-V.

The files can be downloaded directly from Microsoft. To download the files, register to be a part of the Insider Preview Program. Registration and Download information can be found here (https://www.microsoft.com/en-us/software-download/windowsinsiderpreviewserver).

The specific version I will install is the LTSC Preview - Build 17623. The download arrives in the form of an ISO.

The recent news of a new Windows Server 2019 build sent me to the Microsoft Insider Preview Download site. As it was a Microsoft app I wanted to download, I decided to use MS Edge. I normally don't use the Edge browser, but I thought it would be best for accessing MS resources. As I attempted to start the download, I hit a wall. Error 715-123130 appeared whenever I tried to download any of the product links. No matter how many times I tried or how many different tabs I used, the same error appeared.

Microsoft has recently released several cross-platform tools for managing code and databases. It's possible that these tools either would not have been developed previously, or would have been made available exclusively for Windows. But as they say, the times are changing. Microsoft's CEO Satya Nadella has promised a new Microsoft, and here it is.

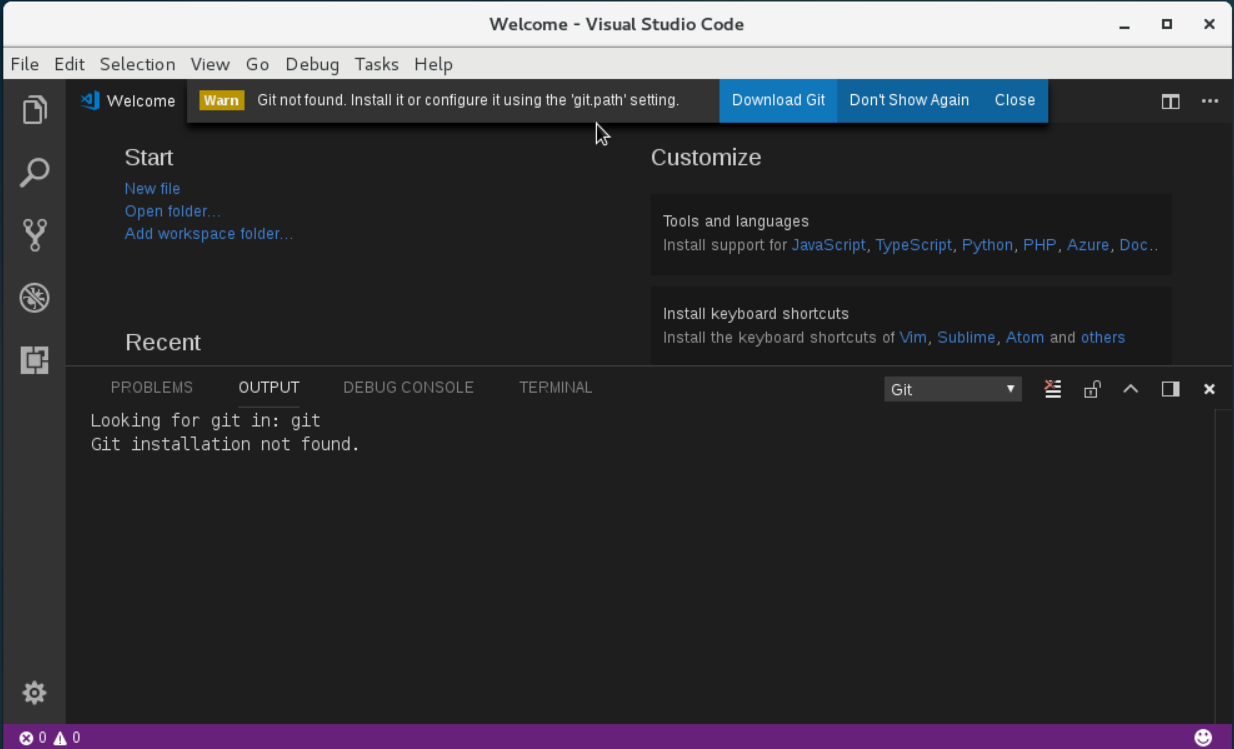

Visual Studio Code is a tool which represents this "new" Microsoft. It's open source, it's free, and it can be used on Windows, macOS, and Linux. Moreover, there is extensive context-sensitive support for multiple programming languages, even non-Microsoft ones. VS Code is a very modern editor offering built-in support for Git, modular extensions, and "IntelliSense," a feature that "provides smart completions based on variable types, function definitions, and imported modules."

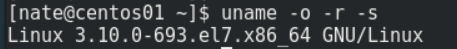

Installation of VS Code on Windows is pretty straightforward, so I decided to try the process on a recent distribution of CentOS 7. Specifically, I used the CentOS release shown below.

The installation instructions for Fedora and RHEL variants can be found here: https://code.visualstudio.com/docs/setup/linux#_rhel-fedora-and-centos-based-distributions

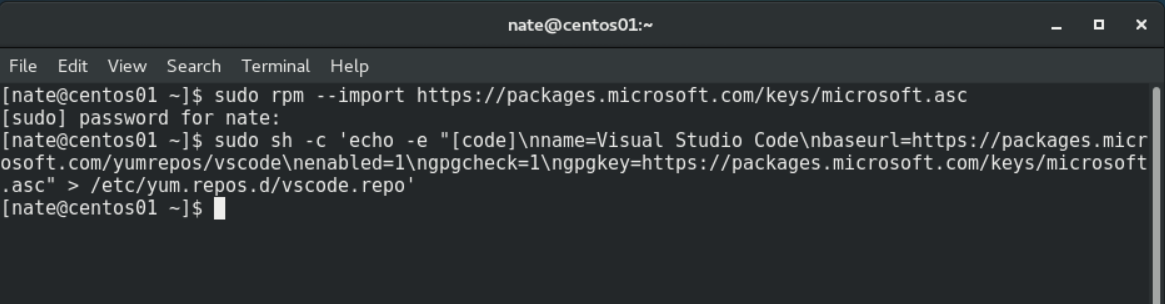

Step 1. Install the correct keys for the MS repo

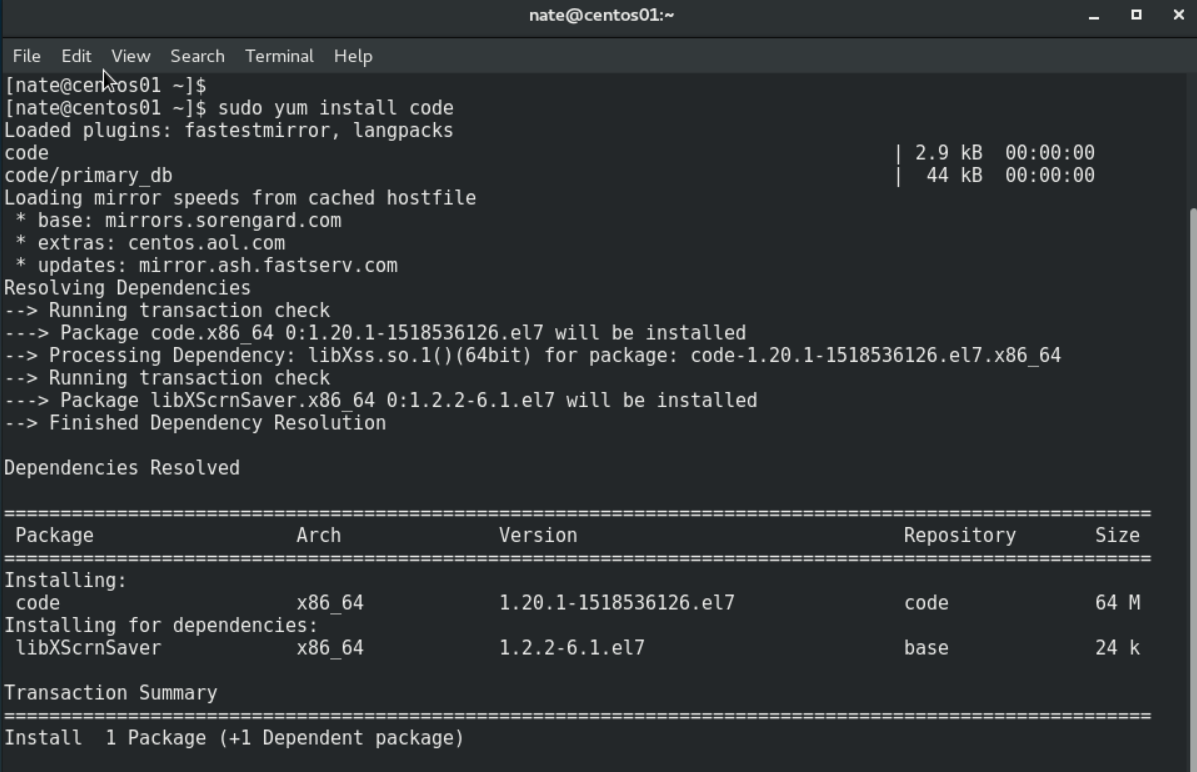

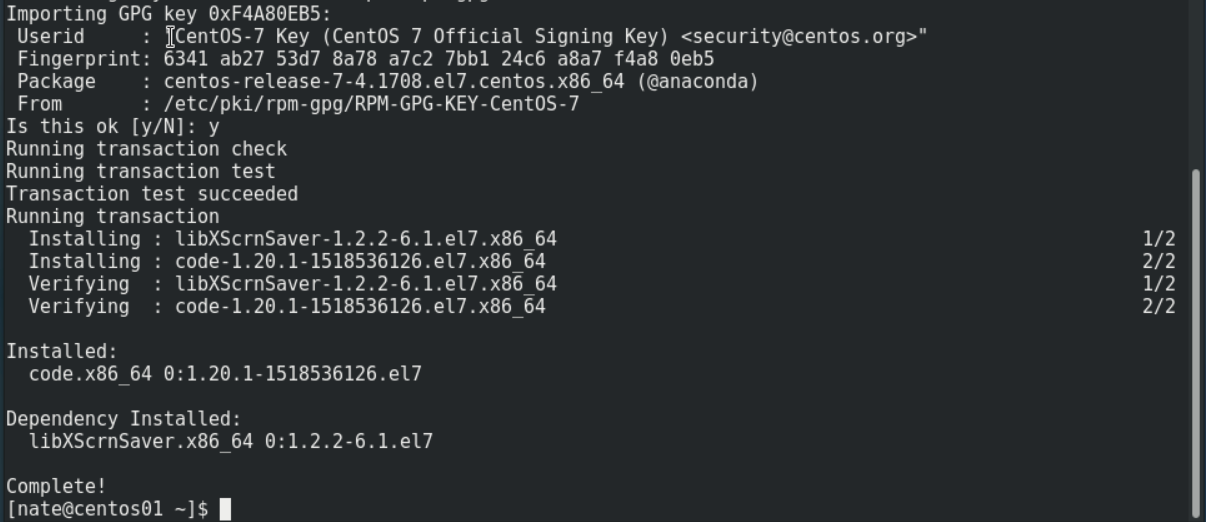

Step 2. Install VS Code. Answer "y" if there are needed dependencies.

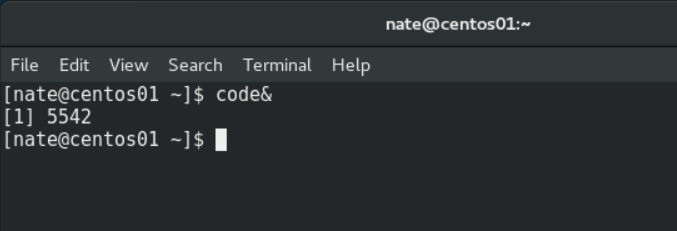

That's it! VS Code is ready to execute. It is not recommended to run VS Code with elevated permissions, so sudo is not needed. Once running, you may discover that you need to also install Git if it isn't already installed.
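A quick way to confirm that both tools are reachable is to look them up on the PATH. The sketch below is a minimal Python check and not part of the official instructions. It assumes the VS Code package provides a launcher named `code` and that the Git binary is named `git`.

```python
import shutil


def find_tools(names, which=shutil.which):
    """Map each command name to its resolved path, or None if it is not on PATH."""
    return {name: which(name) for name in names}


def missing_tools(found):
    """Return the names whose lookup came back empty, in sorted order."""
    return sorted(name for name, path in found.items() if path is None)


if __name__ == "__main__":
    # "code" and "git" are the assumed command names for VS Code and Git.
    found = find_tools(["code", "git"])
    for name, path in found.items():
        print(f"{name}: {path or 'not found'}")
    if missing_tools(found):
        print("Missing:", ", ".join(missing_tools(found)))
```

If `git` shows up as missing, installing it through the distribution's package manager should be enough for VS Code to pick it up on the next launch.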

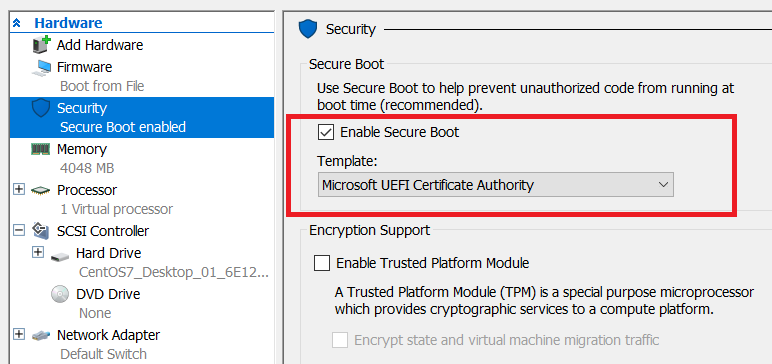

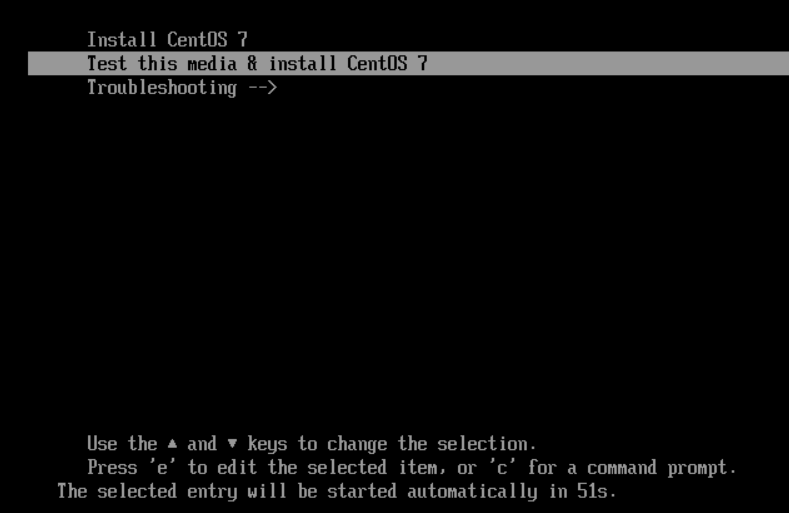

While attempting to install the latest build of CentOS on my Windows 10 laptop running Hyper-V, I hit a wall. An error was displayed telling me the hash and certificate weren't allowed.

Background: I chose to use the "Generation 2" version of the Hyper-V VM with UEFI.

The workaround is to disable the "Secure Boot" option in the settings screen.

Alternatively, the "Secure Boot" selection can be changed to "Microsoft UEFI Certificate Authority." This selection should work for the majority of Linux distributions according to Microsoft. In my case, it worked with the CentOS-7-x86_64-DVD-1708.iso ISO file.

I tried both options, and they both worked. I was able to install the OS as expected. The system continued to boot as normal.
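Both changes can also be scripted instead of clicked through. The sketch below is a minimal Python wrapper, offered under assumptions rather than as the method used in this post: it builds a call to the Hyper-V `Set-VMFirmware` PowerShell cmdlet, and the VM name `centos7-test` is hypothetical. The VM needs to be powered off and the prompt elevated for the command to succeed.

```python
import subprocess


def secure_boot_command(vm_name, disable=False):
    """Build a PowerShell argv that changes Secure Boot for a Generation 2 VM.

    disable=True turns Secure Boot off; otherwise the template is switched to
    the Microsoft UEFI Certificate Authority. The VM name must not contain a
    single quote, since it is placed inside a single-quoted PowerShell string.
    """
    if disable:
        setting = "-EnableSecureBoot Off"
    else:
        setting = "-SecureBootTemplate MicrosoftUEFICertificateAuthority"
    script = f"Set-VMFirmware -VMName '{vm_name}' {setting}"
    return ["powershell.exe", "-NoProfile", "-Command", script]


def apply_secure_boot(vm_name, disable=False):
    """Run the command; requires Windows with the Hyper-V PowerShell module."""
    subprocess.run(secure_boot_command(vm_name, disable), check=True)


if __name__ == "__main__":
    # "centos7-test" is a hypothetical VM name used only for illustration.
    print(" ".join(secure_boot_command("centos7-test")))
```

Keeping the command builder separate from the function that runs it makes it easy to review the exact PowerShell line before applying it to a real VM.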

So what is "Secure Boot" anyway? Microsoft describes Secure Boot as a mechanism to ensure only trusted (untampered) components are used. In this case, it's validating that a trusted OS is booting. The trusts appear to be maintained by certificates managed by Microsoft. Only certain OSes are registered.

More information can be found here:

Generation 2 virtual machine security settings for Hyper-V

There's a really good multi-part series of articles by John Howard. Part 6 focuses on Secure Boot. https://blogs.technet.microsoft.com/jhoward/2013/11/01/hyper-v-generation-2-virtual-machines-part-6/

I recently had an experience which underscored for me the power of AWS CloudFormation. My test lab is almost exclusively run in the cloud now. So when I need to demo things before discussing them with a customer, I build environments in AWS. One such environment was for SQL Server 2016. The original idea was to use Windows Server 2012 as the OS with SQL Server 2016 as the database platform. The customer recently decided that we should look at Windows Server 2016 as the OS instead.

I was able to adjust to the customer's request by altering two lines of code - one per EC2 instance. That's it! Just two lines of code, and I could redeploy the whole setup. The only lines that needed to be updated were the ones referencing the ImageId property. Previously, I would have built these servers in VMware workstation or Hyper-V and it would have taken a few hours. Now, it's just minutes.
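To illustrate how small the change is, here is a minimal Python sketch that swaps the `ImageId` on every EC2 instance in a template. The template below is a hypothetical stand-in for the real one, and the AMI IDs and resource names are placeholders, not real images.

```python
import copy
import json


def swap_image_id(template, new_image_id):
    """Return a copy of the template with every EC2 instance's ImageId replaced."""
    updated = copy.deepcopy(template)
    for resource in updated.get("Resources", {}).values():
        if resource.get("Type") == "AWS::EC2::Instance":
            resource.setdefault("Properties", {})["ImageId"] = new_image_id
    return updated


# Hypothetical two-instance template; AMI IDs are placeholders.
template = {
    "Resources": {
        "SqlNode1": {
            "Type": "AWS::EC2::Instance",
            "Properties": {"ImageId": "ami-EXAMPLE-WS2012", "InstanceType": "m4.large"},
        },
        "SqlNode2": {
            "Type": "AWS::EC2::Instance",
            "Properties": {"ImageId": "ami-EXAMPLE-WS2012", "InstanceType": "m4.large"},
        },
        "SqlSecurityGroup": {
            "Type": "AWS::EC2::SecurityGroup",
            "Properties": {"GroupDescription": "SQL Server lab access"},
        },
    }
}

if __name__ == "__main__":
    updated = swap_image_id(template, "ami-EXAMPLE-WS2016")
    print(json.dumps(updated, indent=2))
```

Only the two instance resources change; the security group and everything else in the template are left as they were, which is why the redeploy is so quick.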

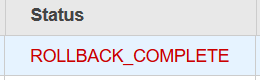

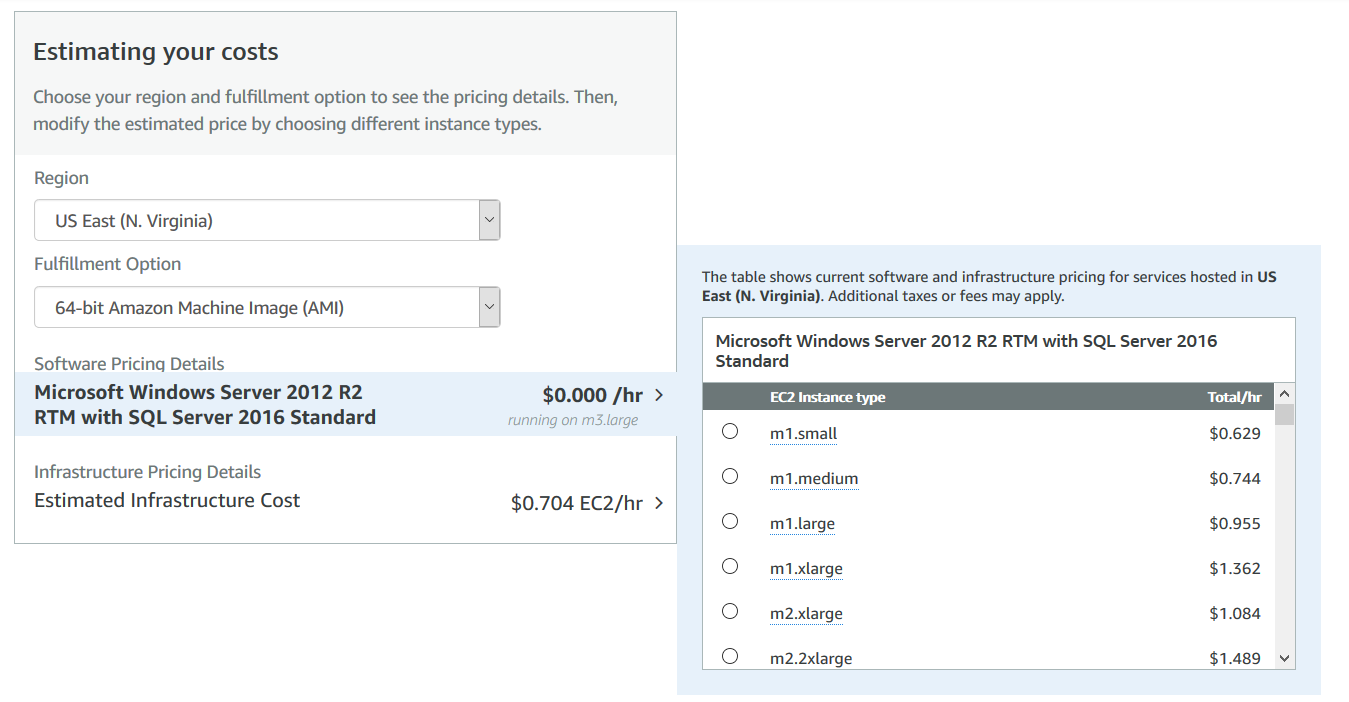

I recently created an Amazon EC2 CloudFormation template to automate the build-out of a Windows Server with SQL Server pre-installed. The template was based on an official Amazon/Microsoft AMI in the AWS Marketplace. Since this was for a simple proof-of-concept test, I wanted to use the t2.medium type, which I've used for various other projects. The t2.medium instance type usually provides reasonable value in terms of price to performance. Upon execution of the CF template, I noticed the template rolled back. When I looked for an error, it read "Microsoft SQL Server is not supported for the instance type 't2.medium'."

The error threw me for a minute, but then I ran a quick Google search and it came back with a few hits. I wasn't the first person to hit this error. I found the page defining the Windows Server 2012 with SQL Server 2016 Standard Edition. That page can be found here (https://aws.amazon.com/marketplace/pp/B01H4DL45A?qid=1518460124383&sr=0-1&ref_=srh_res_product_title). The page lists all supported instance types. My favorite, the t2.medium, was not among them. I instead chose to use the m4.medium, and the template ran to completion as expected. The moral of the story: always check the documentation.

At the time of this writing, the full list of supported EC2 Instance Types for Windows Server 2012R2 with SQL Server 2016 Standard is:

m1.small

m1.medium

m1.large

m1.xlarge

m2.xlarge

m2.2xlarge

m2.4xlarge

m3.medium

m3.large

m3.xlarge

m3.2xlarge

m4.large

m4.xlarge

m4.2xlarge

m4.4xlarge

m4.10xlarge

m4.16xlarge

m5.large

m5.xlarge

m5.2xlarge

m5.4xlarge

m5.12xlarge

m5.24xlarge

c1.xlarge

c3.large

c3.xlarge

c3.2xlarge

c3.4xlarge

c3.8xlarge

c4.large

c4.xlarge

c4.2xlarge

c4.4xlarge

c4.8xlarge

c5.large

c5.xlarge

c5.2xlarge

c5.4xlarge

c5.9xlarge

c5.18xlarge

cc2.8xlarge

cg1.4xlarge

cr1.8xlarge

x1.16xlarge

x1.32xlarge

x1e.xlarge

x1e.2xlarge

x1e.4xlarge

x1e.8xlarge

x1e.16xlarge

x1e.32xlarge

r3.large

r3.xlarge

r3.2xlarge

r3.4xlarge

r3.8xlarge

r4.large

r4.xlarge

r4.2xlarge

r4.4xlarge

r4.8xlarge

r4.16xlarge

g2.2xlarge

h1.2xlarge

h1.4xlarge

h1.8xlarge

h1.16xlarge

hs1.8xlarge

i2.xlarge

i2.2xlarge

i2.4xlarge

i2.8xlarge

i3.large

i3.xlarge

i3.2xlarge

i3.4xlarge

i3.8xlarge

i3.16xlarge

DellEMC has really made it easy for current and potential customers to learn about their products. I recently began looking into Data Domain and found a free self-paced course and a free download of the Data Domain virtual appliance.

The course is called "Data Domain Fundamentals" (Course Number MR-1WP-DDFUND) and is pretty hardcore at times when it comes to speeds-and-feeds type of info. There are sections that discuss in great detail the model numbers, number of disks, etc. of the various devices. I recommend this course to anyone who's a server / storage admin / engineer / architect who might be taking on a Data Domain project. Application admins who use only the Data Domain Boost functionality might get overwhelmed if hardware isn't their jam.

The course estimates an hour and a half to complete, but it took me quite a bit longer. There were a few times when I went back to hear sections again, watch animations, etc. The course has excellent controls, so it's easy to jump around and go back if you think you missed something.

There's a mini exam offered towards the end of the course. From what I could tell, there's no reward nor penalty for passing or failing the quiz.

For those who learn better by doing, DellEMC makes a free Data Domain Virtual edition appliance (https://www.emc.com/products-solutions/trial-software-download/data-domain-virtual-edition.htm) available for VMware and Hyper-V virtualization platforms. There are also options to purchase the appliance in AWS and Azure.

A brief overview video of the Data Domain Virtual Edition can be found here (https://www.youtube.com/watch?v=xtGUQumlesA). The video is about seven minutes in length.

Today, I ran across an error that I was previously unfamiliar with when running VMware Workstation. The error occurred on my Windows 10 laptop with Workstation 14. The error read "VMware Workstation and Device/Credential Guard are not compatible. VMware Workstation can be run after disabling Device/Credential Guard." and was displayed as I attempted to power on the VM.

A quick Google search took me to a VMware KB article (https://kb.vmware.com/s/article/2146361) that lists the cause as "This issue occurs because Device Guard or Credential Guard is incompatible with Workstation." The fix, however, leads to a more general cause: VMware Workstation isn't compatible with Hyper-V. When reading through the fix, the advice starts with instructions for explicitly disabling the "device guard" feature. But then later on, the instructions state to uninstall the Hyper-V role completely. A few more Google searches led me to discussion groups where people see issues running the two hypervisors on the same PC. It seems odd in 2018 that this would be an issue, but it seems to be true.

There was a time when I would have done this, as I generally prefer the way Workstation handles VMs and networking to Hyper-V on a laptop. The problem here is that Docker for Windows 10 uses Hyper-V. So the decision isn't just VMware Workstation or Hyper-V, it's VMware Workstation vs. Hyper-V AND Docker. Since I'm doing more with learning containers, I elected to not use VMware Workstation.

I've been working more hands-on with AWS off and on for the past few months. I've worked on a few cool projects hosted on the AWS infrastructure. Those projects have included SQL Server 2017 containers, CloudFormation templates and working with some of the basic DevOps tools like CodeCommit. During my time working with AWS I began to see the benefits of the platform. I also decided that I should formalize my understanding through certification.

Over the years, I've certified in many different vendors' technologies. While I've tested myself with most of the major vendors, I've yet to go up against an Amazon exam. I'm still somewhat undecided which exam to take first. I've purchased books for both the AWS Certified Solutions Architect - Associate and the AWS Certified SysOps Administrator - Associate exams. SysOps is where my heart has been my entire career, yet there's more employer understanding around the Solutions Architect cert given the number of years the certification has been available. Maybe I'll do both, but I'll cross that bridge later.

IT shops have evolved silos that many of us take for granted. There are server guys, hardware guys, network people, etc. This generally works, as each discipline can take many years to master.

It's only when we look within the individual silos that we see problems begin to form, as the silo model is often duplicated again and again. Within the server world, admins are divided into Windows and UNIX / Linux admins. Networking pros can get divided into router / switch vs. firewall admins. The subgroups become problematic as they generally align the pro with the tool they use.

Defining pros by the tools they use is dangerous and does a disservice to the IT pro. Tools quickly change in IT. The new hotness is often overtaken within a few years. Hiring a "Windows admin" is great as long as your organization runs Microsoft's OS. But what happens if something different comes along? Can your company pivot quickly to it if you've focused your staff specifically on one tech?

I've been trying to find other examples where we classify a person's occupation on the specific tool they use, and I'm stumped. People don't hire a screwdriver pro, nor a hammer technician; we hire carpenters. For IT and the professionals who work in the field to evolve, the current mentality must be questioned.

Focusing on the tools only benefits the tool companies. There's a form of lock-in that occurs when we limit the horizons of the people in an occupation. At their hearts, most OSes are the same. Most network devices move packets similarly enough to one another. Storage too generally works the same from vendor to vendor. In each case there may be some significant differences in the techniques / algorithms used between vendors within any one discipline, but they all share the same fundamental principles under enough layers.

IT pros would do well to keep in mind that tools change quickly and that industry longevity isn't guaranteed, but it can be made easier by understanding the core concepts and applying them to multiple vendors' offerings.

It's hard for me to believe, but it's been almost a year since my last blog post. I'm sure I stopped writing due to the holiday season or something like that. But what I found is that once I stopped, it became more and more difficult to restart. I couldn't do it. I kept telling myself that I would find a "worthy topic," whatever that meant. Then I thought I would maybe make audio or video content.

Part of the difficulty writing was due to the reception of my last post. It was my most read post by a mile. My little blog with 0 traffic suddenly had someone reading an article. I hadn't realised how liberating it was knowing that I was mainly writing for myself. So after nearly a year, I convinced myself to write again.

To those who regularly blog, much respect. It isn't easy. It's hard work that I have far more respect for today than in the past.

The VCA6-DCV exam is a 50 question exam that tests a candidate’s primarily conceptual knowledge of VMware vSphere 6. Unlike the more technically rigorous VCP, the VCA does not require the candidate to complete a course ahead of time. A free online course is recommended, but it’s optional. The exam is offered online and can be taken on the candidate’s computer at home.

Exam Basics:

VMware offers a 25 question practice exam with unlimited retries. Depending on the type of test taker you are, this will either help you, or give you more to worry about before the actual exam.

Candidates are required to request authorization before they can register to attempt the exam. VMware says the process can take up to 15 minutes to complete. For me, it took closer to 30 minutes. I’m not sure why there was a delay, but it appears to be a situation where your mileage may vary. I received an e-mail notifying me that the exam registration was available. I followed the link, and prepared to use the 20% exam discount offered through the VMware User Group (VMUG).

When I logged onto the Pearson VUE website, my Pre-approved exam wasn’t listed. I suspect the issue was caused by different VMware candidate IDs. There was a different candidate ID listed under my Pearson VUE account than what was listed for me in the VMware myLearn portal. At some point, I’ll see if these accounts can be merged. If you don’t see the approval in myLearn then check that the candidate ID on the VMware site matches what Pearson VUE has listed.

I entered my discount code, then appropriate billing information. The exam price was reduced to $96.00. The exam was ready to begin as soon as the payment was processed.

I completed the test with a little more than 50 minutes remaining. I held my breath as I hit the submit button, and exhaled when I saw the “Congratulations!” notice.

Candidates are forbidden from discussing questions, so I will avoid that type of talk. Anyone who’s ever taken a certification exam offered by Pearson VUE or Prometric will feel right at home with the controls which include marking answers and the ability to go back to review items. The format is multiple choice. While I can’t discuss actual questions, I recommend doing more than just casually watching the recommended course. In some cases, my previous experience with the product is what made the difference.

The exam results text says it can take up to 30 calendar days for notification from VMware. I received my notification in about 24 hours. The notification consisted of two e-mails. The first was to inform me that the transcript had been updated. The second was a congratulatory e-mail for passing the exam.

The VCA exam has been met with mixed results since it was introduced in 2013. Critics accused the test of not being technical enough to hold value. This exam was similarly light on deep technical content, but that was OK. The VCA wasn’t intended to replace the VCP. Overall, the exam succeeded at asking questions appropriate for an entry level professional, or for one whose job functions interact with virtualization admins.

VMware recently released an entry level exam for data center virtualization professionals called the VMware Certified Associate 6 - Data Center Virtualization (VCA6-DCV). This certification is an update of the previous version 5 certification. The VCA-DCV based on vSphere 5 is due to be retired on November 30, 2015. VMware recommends those interested in the VCA6-DCV exam first complete a course called Data Center Virtualization Fundamentals.

I took this course as it was recommended by VMware as preparation for the exam. The course is offered online for free and can be accessed right after registering for the class. The course is available for quite a few months after signing up – helpful for those who might not be able to complete the course right away. Even if one couldn’t finish the course in the allotted time, it’s free, so one could simply sign up for a second time. The class takes a few hours to complete and can be started and continued multiple times.

The course doesn’t offer any hands on exercises. I say this up front because it communicates something about the type of course this is. This class is meant to familiarize the student with the language of VMware. There’s much here to familiarize a person with the dictionary of terms related to the various features one should know and where they fit within an enterprise. The course excels at providing basic to intermediate concepts related to vSphere 6 and providing thoughts on the applicability of those features in a typical enterprise datacenter.

There are many people who could benefit from the type of information in this course. It could be especially beneficial to those who need a high level understanding of VMware. Network, storage, and server engineers who work with and support virtualization engineers will find this helpful. It’s also good for those looking to move up in their IT career from other positions though they may need to put in time reading about the other topics outside of virtualization that are discussed. C-Level executives will also find the content appropriate as it explains many features that VMware feels differentiate them in the marketplace and make the VMware hypervisor more than a commodity datacenter technology.

The session is less helpful for experienced VMware admins / engineers who are looking for explicit how-to type of instructions, or really deep understanding of the limitations of the features and concepts presented. The objectives of the course modules are centered on the words, “describe” and “explain.” The course could be used as a form of “What’s New” learning opportunity as the cost is free.

Microsoft released a free screen recording app that's compatible with Windows 7 and 8. It's possible that the app is also compatible with Windows 10, though I haven't tried it yet. Mac users have QuickTime for free, so it's not an issue on that platform.

Basic Info:

Link to the app: https://technet.microsoft.com/en-us/magazine/2009.03.utilityspotlight2.aspx?pr=blog

Format used: .wmv

Editing capabilities: No

The tool is very basic but effective. The simple controls make the app quick to learn.

The app is small, simple, and effective. Moreover, it's from Microsoft. That may not mean much, but when it comes to free tools, I'm very cautious of the source. If there's any drawback, it's the lack of any editing capabilities.

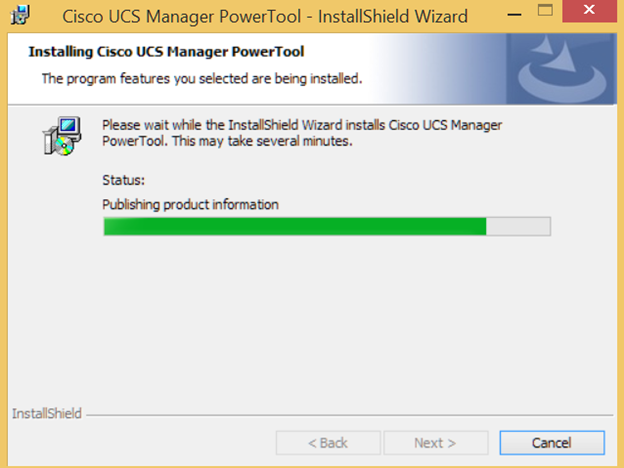

Cisco UCS Manager can be managed via PowerShell via the Cisco UCS PowerTools. The most current version is 1.5.2 as of November 5, 2015. The software is available for download via the Cisco Communities site for UCS then found under UCS Integrations. The following link will take you directly to the software: https://communities.cisco.com/docs/DOC-53838 .

Cisco provides a reference poster that aids new users just starting out. The poster can be downloaded here: https://communities.cisco.com/docs/DOC-57485

The UCS capabilities are varied and can be strung together to do some pretty cool things such as:

Backup of UCS Configuration

Checking for new firmware

Modifying boot configurations

Change power states of blades

Installation is super straightforward.

Download the tool

Run the installer

Follow the prompts

The UCS cmdlets can be accessed either via the tool itself, or through the Windows PowerShell ISE.

Future blog posts may explore sample scripts to use and modify.

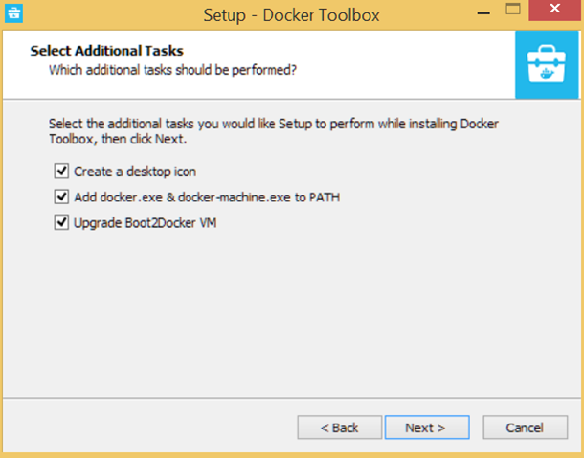

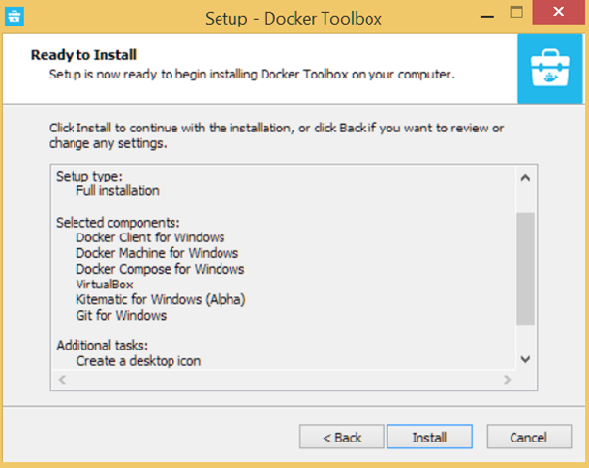

I decided to install Docker on my Windows 8.1 laptop. I’ll also install it on a server in the future and see how easy it is to move containers around. There are lots of moving parts to a full-on container infrastructure, but I’m starting w/ the most basic of building blocks – Docker itself.

The Docker website has instructions for installing the software on the three main platforms out there – Linux, Mac OS X, and Windows. I followed the Windows Instructions. At the time of this writing, version 1.9.0a is the most recent installer. http://docs.docker.com/windows/started/

The first thing that got my attention was the fact that it’s really a small ecosystem that gets installed. The parts consist of:

Docker Client for Windows

Docker Toolbox management tool and ISO

Oracle VM VirtualBox

Git MSYS-git UNIX tools

Device Driver Install for Virtual Box…

At this point the install is supposedly good to go. One difference between the Docker instructions and my install was a missing VirtualBox icon on my machine. Otherwise, all seemed fine when I executed the Docker Quickstart Terminal.

… And then .. It’s ready! A quick glance at the IP shows that it’s not on the same network as my laptop. My guess is that there’s some kind of NAT happening behind the scenes.

The instructions on the Docker website remind users that the command prompt for the environment is part of a bash shell, and that they aren’t in Windows anymore. It’s a nice touch and while some might pick up on it, others might not.
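The "not on the same network" observation is easy to confirm with a few lines of Python. The sketch below is only an illustration: both addresses are hypothetical stand-ins for the laptop and the Docker VM, and the /24 prefix length is an assumption about how the networks are laid out.

```python
import ipaddress


def same_network(addr_a, addr_b, prefix_len=24):
    """True if both IPv4 addresses fall inside the same network of the given prefix length."""
    network = ipaddress.ip_network(f"{addr_a}/{prefix_len}", strict=False)
    return ipaddress.ip_address(addr_b) in network


if __name__ == "__main__":
    laptop_ip = "192.168.1.25"       # hypothetical laptop address
    docker_vm_ip = "192.168.99.100"  # hypothetical Docker VM address
    print(same_network(laptop_ip, docker_vm_ip))
```

When the two addresses land in different networks, something has to route or translate between them, which fits the guess about address translation.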

The instructions then direct the user to verify the installation by running the command “docker run hello-world.” As they go on to explain, it’s a simple command, but it verifies a lot of important functions:

1. The Docker client contacted the Docker daemon.

2. The Docker daemon pulled the "hello-world" image from the Docker Hub.

(Assuming it was not already locally available.)

3. The Docker daemon created a new container from that image which runs the

executable that produces the output you are currently reading.

4. The Docker daemon streamed that output to the Docker client, which sent it

to your terminal.
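The same verification can be automated. The sketch below is a minimal Python wrapper around the `docker run hello-world` command from the instructions. It assumes the image's greeting contains the text "Hello from Docker", and the function names are my own.

```python
import subprocess

# Assumed marker text from the hello-world image's greeting line.
EXPECTED_MARKER = "Hello from Docker"


def hello_world_ok(output):
    """True if the captured output contains the expected greeting."""
    return EXPECTED_MARKER in output


def run_hello_world():
    """Run the container and report whether it exited cleanly with the greeting."""
    result = subprocess.run(
        ["docker", "run", "hello-world"],
        capture_output=True,
        text=True,
        check=False,
    )
    return result.returncode == 0 and hello_world_ok(result.stdout)


if __name__ == "__main__":
    try:
        print("Docker looks healthy" if run_hello_world() else "Docker check failed")
    except FileNotFoundError:
        # Raised when the docker client is not installed or not on PATH.
        print("docker command not found")
```

A passing check exercises the client, the daemon, the image pull, and container creation in one go, which is the point the instructions make.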

Beginning to end, the Docker for Windows package took me 15-20 minutes to install, and I was starting with only a conceptual idea of the product. It’s surprisingly simple to set up the core software. Over the next few weeks, I’ll explore other topics such as the various software images available, creating my own image(s), and moving images around between my local machine and AWS (and possibly other public cloud providers).

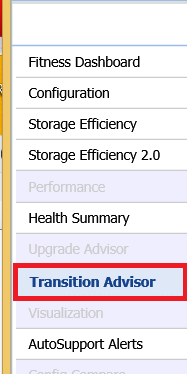

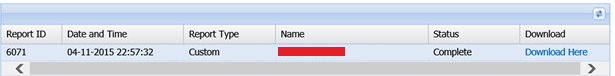

NetApp has released a Transition Advisor tool on the My AutoSupport website to help customers evaluate which of the 7-Mode features in their environment are supported in clustered Data ONTAP. The tool takes the data NetApp has on the systems and produces a PDF file that lists each feature along with a simple traffic-light legend. Green means you're good. Red means you aren't. Yellow or orange means you need to do some research. Actually, in every case, customers should review the results along with the feature and determine how necessary it is in the environment.

Steps I used to access Transition Advisor

1. Log on to support.netapp.com

2. Access "My AutoSupport"

3. Select the appropriate site.

4. Select the systems to investigate in the main window

5. In the navigation pane, select "Transition Advisor"

6. In the Generate Report Tab, select the appropriate destination version, the output format (PDF or CSV), and whether or not it's an HA Pair. Then select "Submit Request."

7. On the "Download Report" tab, the status reads "In progress."

8. Once the report is complete, an e-mail is sent to the requester. The status also will show as "Complete."

The report is then available to download and read as part of an initial planning session for a transition from 7-Mode to Cluster Mode.

Microsoft recently announced via a blog post that they would discontinue unlimited storage options for Office 365 Home, Personal, and University accounts. This news has been met with near universal unhappiness. Many customers feel disappointed, and those feelings are justifiable. A major vendor with a big name and deep pockets offered a great deal then altered the terms of the agreement. Those users who were told they would have unlimited storage will be capped at 1 TB with about a year to move under 1 TB if they aren't already. While Microsoft is entirely within their rights, the change will lead many people to question how much they trust the public cloud.

This altering of the deal highlights some of the fears the public has with the cloud, specifically, the lack of control. The last few years have seen many vendors exclaim the virtues of cloud, and how it's supposed to free us. Laptop vendors used the near-ubiquitous nature of cloud storage vendors such as Dropbox as a justification for including low-capacity SSDs. People who clung to local storage were often ridiculed as being old fashioned. Yet here we are.

I agree with many on the Internet who believe the Microsoft announcement is really just a way for the tech giant to back out of the cloud storage market. Claiming that they discontinued unlimited storage because a few people used more storage than Microsoft expected anyone to use is nuts. They offered UNLIMITED storage. Did no one there consider that people would actually put that to the test? It's extremely unlikely that Microsoft would roll out a service without considering the possibility of outliers at the top end. Microsoft has been in business for too long, and has too many smart people to drop a service because someone went to the all-you-can-eat buffet and overstayed their welcome.

It's far more likely that they looked at their place in the market for online storage and found that they weren't making up significant enough ground on the market leaders. While cloud storage has been getting cheaper for customers, storage remains a physical thing that costs someone money. In this case, it's Microsoft. Let's assume Microsoft used the cheapest storage available today: a datacenter full of whitebox servers, each crammed with high-capacity drives running Windows Storage Spaces instead of local RAID. While cost effective, it still costs money to build, house, provision, cool, and support. There had to be a sizable investment made to go after Dropbox and others, yet it's possible that they couldn't gain the traction they wanted. I don't recall seeing any numbers related to users, adoption, or mind-share related to the service, even though they offered an unlimited amount of storage.

In the end, consumers of cloud services need to remember that they are renting a product. As such, they should take appropriate precautions, and be prepared for modifications to services, or worse, the complete elimination of a service whenever a product offering is no longer profitable.

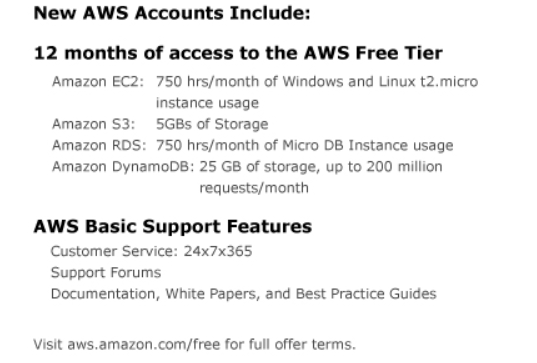

Confession time - I've never set up my own AWS instance. Ever. I've read about it, and I've even taken training for AWS; but I've never setup my own AWS anything for personal use. I've used competitive offerings such as MS Azure and Verizon's Terremark, but not AWS. I decided to take the plunge today since #vDM30in30 is about new experiences, learning, and experimentation. This post will cover my general impressions of the AWS sign up process.

So how did it go? Well, my honest impression of the setup process is that it probably could have been a little easier. I decided to select the Free tier. I was asked to sign up. The login / sign up dialogue box resembled the same screen used for buying products through Amazon; however, the same UID / pw combination didn't work. It's a new and separate account. It's a minor annoyance but definitely not a show stopper.

Next, I had to verify my tier. Amazon does a pretty good job of explaining what you get for free. The problem is in knowing if it's enough. In addition, only a small subsection of services are mentioned.

After some basic payment info, I thought I'd be done. Not so fast! Amazon does an interesting and welcome Identity verification check where an automated system calls a telephone number you provide. Upon answering the call, the applicant enters a four digit code that's provided onscreen.

AWS Identity Verification Screen

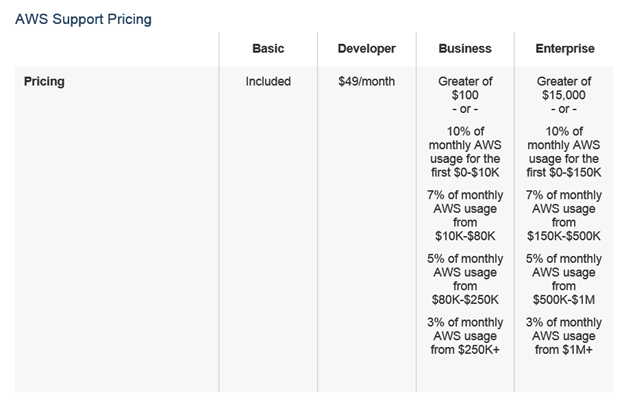

The applicant is then asked to review the support plan options. Nothing here is too surprising, but the upper tiers really provide what appears to be exceptional support. Then again for a minimum of $15,000 an enterprise should receive "white glove case handling." Also eye-catching is the fact that telephone support isn't available for anything less than $100. Basic developer level support allows for e-mailing support.

There's a lot of information, and I could see how someone buying AWS in a shadow IT ops type of situation would make a mistake by either buying too much or not enough support.

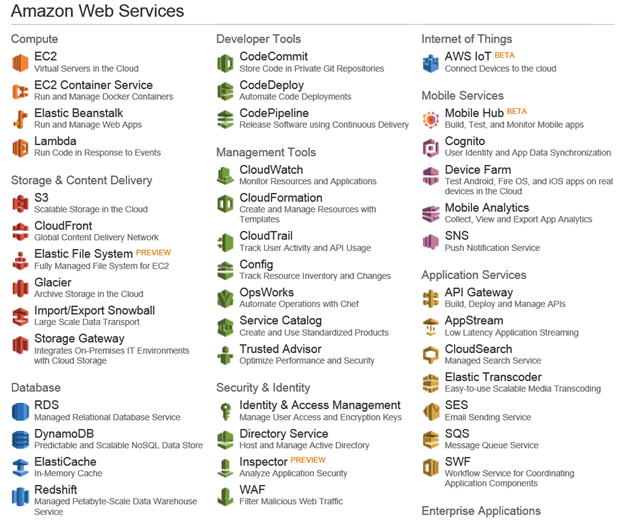

So after all of the screens and choices, I signed into the console and was overwhelmed by the choices. The vast number and types of options were intimidating. It reminded me of a friend who installed Oracle back when he was starting out in IT. Upon seeing a screen full of icons he asked, "So what do I do now?" I had a very similar feeling looking over the ocean of choices.

The last few years have been filled with warnings of Shadow Ops, the concept of non-IT departments buying and deploying cloud-based services on their own without the knowledge or consent of a centralized IT department. Based on what I just experienced, I see this trend slowing down when it comes to AWS. AWS has added tons of features. So many features, I'd argue that the complexity associated with deploying an app properly has also increased. Confronted by all of these options, it seems unlikely for a less sophisticated power user to go out and deploy an app on AWS. Amazon makes sign up and payment easy, but that's not the difficult part.